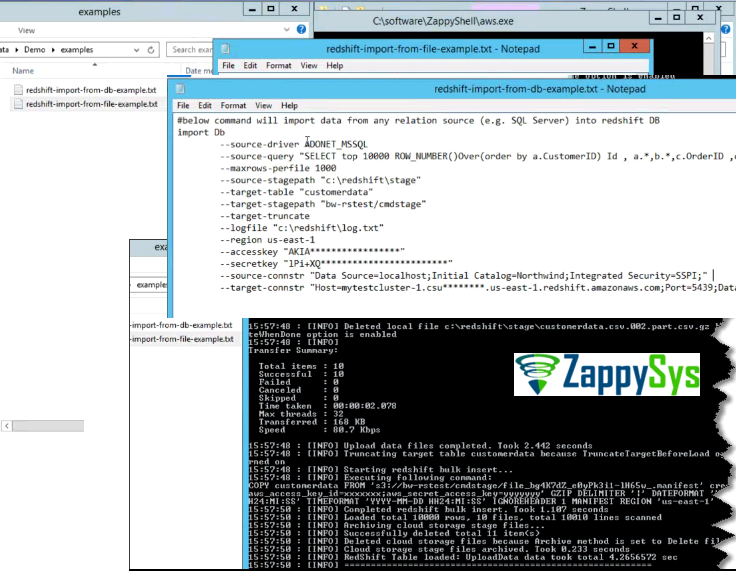

I'm working on an application that loads data into Redshift. I want to upload files to S3 and use the COPY command to load the data into multiple tables. In every iteration I need to load data into around 20 tables, so I currently create 20 CSV files per iteration, one per table. For the next iteration, 20 new CSV files are created and loaded into Redshift. With my current system, each CSV file contains at most 1,000 rows, which gives a maximum of 20,000 rows per iteration across the 20 tables.

I want to improve the performance even more. At this point, I'm not sure how long it takes for one file to load into one Redshift table. Is it really worth splitting every file into multiple files and loading them in parallel? Is there any source or calculator that gives approximate performance metrics for loading data into Redshift tables, based on the number of columns and rows, so that I can decide whether to split the files before moving to Redshift?

The COPY command reads and loads data in parallel from a file or multiple files in an S3 bucket, and it appends the new input data to any existing rows in the target table. You can take maximum advantage of parallel processing by splitting your data into multiple files, in cases where the files are compressed.

You should also read through the recommendations in the Load Data - Best Practices guide. Regarding the number of files and loading data in parallel, the recommendations are:

- Loading data from a single file forces Redshift to perform a serialized load, which is much slower than a parallel load.
- Load data files should be split so that the files are about equal size, between 1 MB and 1 GB after compression. For optimum parallelism, the ideal size is between 1 MB and 125 MB after compression.
- The number of files should be a multiple of the number of slices in your cluster.

That last point is significant for achieving maximum throughput: if you have 8 nodes then you want n*8 files, so that all nodes are doing maximum work in parallel.

That said, 20,000 rows is such a small amount of data in Redshift terms that I'm not sure any further optimisation would make a significant difference to the speed of your process as it currently stands.

We have a file in S3 that is loaded into Redshift via the COPY command. The import is failing because a VARCHAR(20) value contains a character that is translated into something else during the COPY command, which makes the value too long for the 20 characters. I have verified that the data is correct in S3.

Solution 2.1: Create the views as tables and then COPY, if you don't care whether it is a view or a table on Redshift.

Solution 2.2: First COPY all the underlying tables, and then CREATE VIEW on Redshift.
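The advice about splitting load files into equal parts, with a file count that is a multiple of the slice count, can be sketched in Python roughly as follows. This is only an illustration under stated assumptions: the helper names (`split_rows`, `to_gzipped_csv`, `build_copy_sql`), the slice count of 8, the table name, the S3 prefix and the IAM role ARN are all hypothetical placeholders, and uploading the parts to S3 is left out.

```python
import csv
import gzip
import io


def split_rows(rows, num_files):
    """Split rows into num_files chunks whose sizes differ by at most one row."""
    if num_files < 1:
        raise ValueError("num_files must be at least 1")
    base, extra = divmod(len(rows), num_files)
    chunks = []
    start = 0
    for i in range(num_files):
        # The first `extra` chunks take one additional row each.
        size = base + (1 if i < extra else 0)
        chunks.append(rows[start:start + size])
        start += size
    return chunks


def to_gzipped_csv(rows):
    """Serialize rows to gzip-compressed CSV bytes, ready to be uploaded."""
    text = io.StringIO()
    csv.writer(text).writerows(rows)
    return gzip.compress(text.getvalue().encode("utf-8"))


def build_copy_sql(table, s3_prefix, iam_role):
    """Build a COPY statement that loads every object under a common key prefix.

    All three arguments are placeholders supplied by the caller.
    """
    return (
        f"COPY {table} FROM '{s3_prefix}' "
        f"IAM_ROLE '{iam_role}' "
        "FORMAT AS CSV GZIP;"
    )


# Assumption: the cluster has 8 slices, so we produce 8 files (n = 1).
num_slices = 8
rows = [[i, f"name-{i}"] for i in range(1000)]
parts = [to_gzipped_csv(chunk) for chunk in split_rows(rows, num_slices)]

copy_sql = build_copy_sql(
    "my_table",                                     # hypothetical table
    "s3://my-bucket/loads/my_table/part_",          # hypothetical prefix
    "arn:aws:iam::123456789012:role/MyCopyRole",    # hypothetical role
)
```

Each element of `parts` would be uploaded under the shared key prefix so that a single COPY picks up all of them. With only 1,000 rows per table the parts are tiny, well below the 1 MB lower bound quoted above, which is consistent with the point that splitting is unlikely to matter much at this volume.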
